
Rectlabel for object detection

9/9/2023

On a Raspberry Pi 4 with 8 GB of RAM, a frame rate of around 10 FPS was observed.

Suppose your project contains a high number of large objects. Their relative IoU is impacted less when they take up a large number of pixels than it is for medium or small objects, which take up fewer. That said, very large objects also tend to underperform. If an object is usually large, your model will perform worse in cases where the same type of object appears smaller, so variations in box size in your training data should be consistent. Two perfectly overlapping annotations have an IoU of 1.00. IoU tells you how much of the total area of an object your predictions tend to cover.

Callout: Intersection over Union (IoU) is measured as the area of overlap between your model's prediction and the ground truth, divided by the area of their union.
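The IoU definition above can be sketched in a few lines. This is a minimal illustration, not code from the project: the function name `iou` and the `(x_min, y_min, x_max, y_max)` box convention are assumptions made for the example.

```python
from typing import Tuple

# Assumed box convention for this sketch: (x_min, y_min, x_max, y_max).
Box = Tuple[float, float, float, float]


def iou(box_a: Box, box_b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap rectangle (zero if the boxes do not overlap).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0


# The same 5-pixel horizontal shift applied to a small and a large box.
small = iou((0, 0, 20, 20), (5, 0, 25, 20))
large = iou((0, 0, 200, 200), (5, 0, 205, 200))
```

Comparing `small` and `large` shows why the relative IoU of a large box is affected less by the same pixel offset than that of a small box.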

The output order of a model created using Model Maker is different from that of pre-trained models. The arrangement of the information in the output details for a pre-trained model and a custom model is shown below. So, I modified the detection part of my code to follow the order of the output details of the custom model, and it worked fine. The code snippet performing this task is shown below.

For a pre-trained model:

    def detect_objects(interpreter, image, score_threshold=0.3, top_k=6):
        boxes = get_output_tensor(interpreter, 0)
        class_ids = get_output_tensor(interpreter, 1)
        scores = get_output_tensor(interpreter, 2)
        count = int(get_output_tensor(interpreter, 3))

For a custom model created using Model Maker, the same four reads inside the function become:

        scores = get_output_tensor(interpreter, 0)
        boxes = get_output_tensor(interpreter, 1)
        count = int(get_output_tensor(interpreter, 2))
        class_ids = get_output_tensor(interpreter, 3)

Observe how the parameters (boxes, class_ids, scores, count) are read differently for the pre-trained and the custom model in the above code.

Running the Model with Coral USB Accelerator

The Colab notebook allows you to create a model for the Edge TPU. The model worked fine with the code containing the modified detection part.
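One way to keep the two orderings in a single place is a small lookup table. The sketch below is only an illustration under stated assumptions: `OUTPUT_ORDER`, `unpack_outputs` and the `"pretrained"` / `"custom"` keys are hypothetical names, and the index positions are the ones described in the text above.

```python
from typing import Any, Dict, Sequence

# Output-tensor positions as described in the text above (hypothetical table name).
OUTPUT_ORDER = {
    "pretrained": ("boxes", "class_ids", "scores", "count"),
    "custom": ("scores", "boxes", "count", "class_ids"),
}


def unpack_outputs(tensors: Sequence[Any], model_kind: str) -> Dict[str, Any]:
    """Map positional output tensors to named fields for the given model kind."""
    names = OUTPUT_ORDER[model_kind]
    if len(tensors) != len(names):
        raise ValueError(f"expected {len(names)} output tensors, got {len(tensors)}")
    result = dict(zip(names, tensors))
    # The detection count is read as an integer, as in the article's snippet.
    result["count"] = int(result["count"])
    return result
```

With the article's own helper, a call might look like `unpack_outputs([get_output_tensor(interpreter, i) for i in range(4)], "custom")`, so the rest of the detection code can refer to the fields by name regardless of which model is loaded.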

Please note that the order of labels provided during training should be maintained while creating the label file.

Running the model on Raspberry Pi

An object detection model returns four parameters (boxes, class_ids, scores, count) as a result of inference. These details are stored in the output tensors and can be accessed through the interpreter object. During the initial attempts, the code was throwing an error, so I checked the contents of the output details by simply printing them. I observed that the output details are arranged in a different order for the model created using Model Maker.
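Printing the output details, as described above, can be made a little easier to read with a helper like the one below. This is a sketch, not the project's code: `describe_outputs` is a hypothetical name, and it assumes the list of dictionaries (with `name` and `shape` entries) that the TensorFlow Lite interpreter's `get_output_details()` returns.

```python
from typing import Any, Dict, List


def describe_outputs(output_details: List[Dict[str, Any]]) -> List[str]:
    """Return one line per output tensor: its position, name and shape."""
    lines = []
    for position, detail in enumerate(output_details):
        shape = tuple(int(d) for d in detail["shape"])
        lines.append(f"{position}: {detail['name']} shape={shape}")
    return lines


# Typical use with a loaded interpreter (not run here):
#     for line in describe_outputs(interpreter.get_output_details()):
#         print(line)
```

Comparing the printed positions for a pre-trained model and a Model Maker model makes any difference in ordering visible at a glance.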

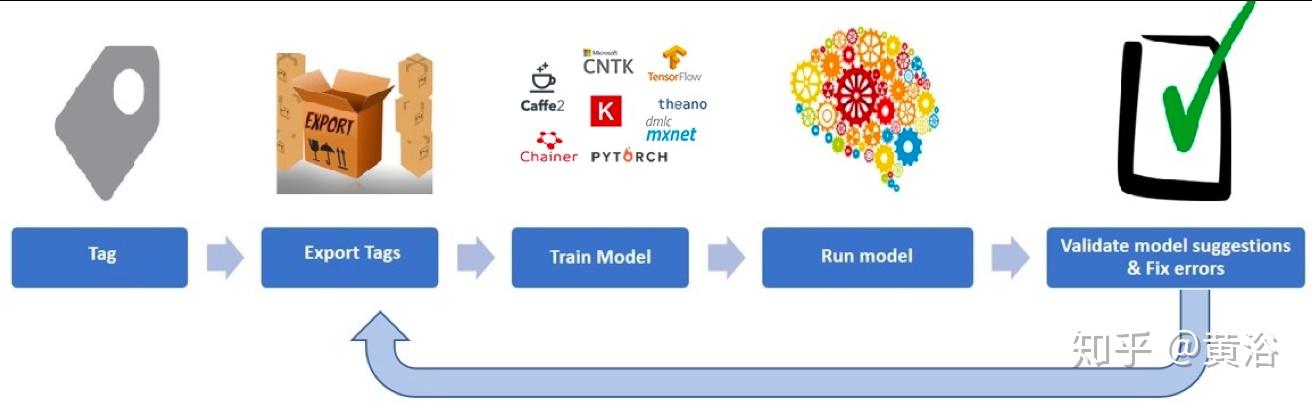

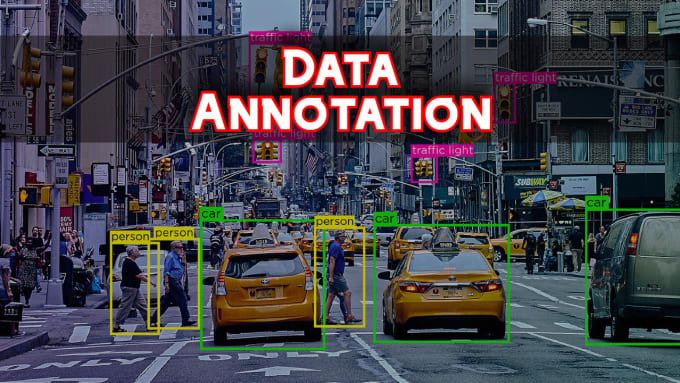

The process of training a custom model has been simplified to a great extent by the TensorFlow team with the introduction of the Model Maker tool. The working of this tool is demonstrated by a Colab notebook created by TensorFlow team member Khanh LeViet. The steps involved in creating a custom model are well explained in the Colab notebook. You can save a copy of the original Colab notebook in your Google Drive and personalise it by adding or modifying the information in it. I derived a notebook from the original notebook. In this article, I will cover the unique aspects related to my project.

Preparing the dataset requires you to take pictures of the objects and label them using a labeling tool. Here, labeling means manually drawing the bounding boxes around an object in the image. The tool creates an xml or csv file containing this information. A sample image from my dataset with manually created bounding boxes around the objects of interest is shown below. The corresponding xml file generated by the tool, containing the coordinate information of the objects, is as shown. The tags 'pose' and 'truncated' are not generated by this tool, so I added these tags manually, as they are required by the DataLoader function while loading the dataset in the Colab notebook. In this manner, I created 101 images for training and 15 images for validation. My dataset can be downloaded from this link.

The TensorFlow GitHub page provides sample code for running an object detection model on Raspberry Pi. This code makes use of the 'tflite_support' library to perform detection. This method does not require a separate label text file and uses the label information embedded in the model as metadata for this purpose. However, in my case, I have created a label text file manually. I did this because the 'tflite_support' library is not used for the detection task in my project. The label file created for this project is shown below.
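Adding the 'pose' and 'truncated' tags by hand gets tedious for a hundred or so files, so the step could also be scripted. The sketch below is an assumption-laden illustration rather than the method used for this project: `add_missing_tags` is a hypothetical helper, the default values `Unspecified` and `0` are commonly seen in Pascal VOC style annotations but are assumed here, and the sample annotation is made up.

```python
import xml.etree.ElementTree as ET


def add_missing_tags(xml_text: str, pose: str = "Unspecified", truncated: str = "0") -> str:
    """Add <pose> and <truncated> to every <object> that lacks them."""
    root = ET.fromstring(xml_text)
    for obj in root.iter("object"):
        if obj.find("pose") is None:
            ET.SubElement(obj, "pose").text = pose
        if obj.find("truncated") is None:
            ET.SubElement(obj, "truncated").text = truncated
    return ET.tostring(root, encoding="unicode")


# Made-up annotation, only to exercise the helper.
SAMPLE = """<annotation>
  <filename>img_001.jpg</filename>
  <object>
    <name>widget</name>
    <bndbox><xmin>10</xmin><ymin>20</ymin><xmax>110</xmax><ymax>220</ymax></bndbox>
  </object>
</annotation>"""

patched = add_missing_tags(SAMPLE)
```

Because the helper only adds a tag when it is absent, running it twice on the same file leaves the annotation unchanged.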

For object detection, there are many pre-trained models available for edge devices such as the Raspberry Pi. Most of them are trained on the COCO dataset and can detect up to 90 types of common objects. However, some applications need to detect objects that are not covered by the pre-trained models. In such cases, you need to train a custom model for your use case. A snapshot of the model created for this project in operation is shown below.
